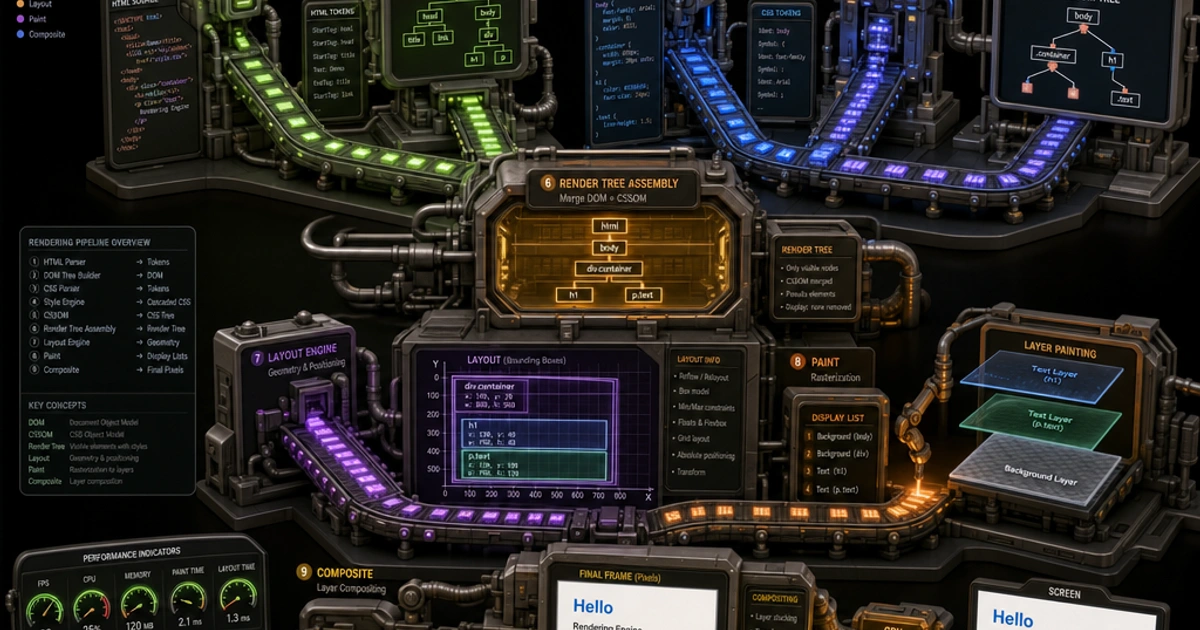

Every time a browser loads a page, it runs the same multi-stage pipeline to convert bytes on the network into pixels on screen. Most developers know pieces of it (the DOM, the CSSOM, reflow) but not how they connect. Once you understand the full sequence, performance decisions stop feeling arbitrary and start to follow logically.

```mermaid
flowchart LR
    HTML[HTML bytes] --> DOM[Parse -> DOM]
    CSS[CSS bytes] --> CSSOM[Parse -> CSSOM]
    DOM --> RT[Render tree<br/>visible nodes only]
    CSSOM --> RT
    RT --> LAY[Layout<br/>compute geometry]
    LAY --> PT[Paint<br/>fill pixels per layer]
    PT --> COMP[Composite<br/>GPU blends layers]
    COMP --> SCREEN[Pixels on screen]
```

Step 1: Parsing HTML → the DOM

The browser receives raw HTML bytes and converts them into a Document Object Model — a tree of nodes. Each HTML element becomes a node, with parent-child relationships that mirror the nesting in your markup.

Parsing happens incrementally. The browser doesn't wait for the entire HTML to download before it starts building the DOM. It processes the document as bytes arrive, which means the order of elements in your HTML has real performance implications.

The parser is also fault-tolerant. It handles malformed HTML according to the HTML parsing specification — closing unclosed tags, inferring missing elements, and continuing rather than erroring. This is why broken HTML usually renders anyway.
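To make the tree-building idea concrete, here is a deliberately tiny sketch. It is not the HTML spec's tree-construction algorithm: it assumes the bytes have already been decoded and split into a hypothetical token list (`{ type: 'start' | 'end' | 'text', ... }`). It keeps a stack of open elements, nests each new node under whatever element is currently on top, and, in the spirit of the parser's error recovery, closes unclosed elements implicitly and ignores stray end tags instead of throwing.

```javascript
// Toy tree builder: the core idea only, not the spec algorithm.
// Hypothetical pre-tokenized input:
//   { type: 'start', tag: 'p' } | { type: 'end', tag: 'p' } | { type: 'text', text: 'hi' }
function buildTree(tokens) {
  const root = { tag: '#document', children: [] };
  const open = [root]; // stack of open elements; the top is the current parent

  for (const token of tokens) {
    const parent = open[open.length - 1];
    if (token.type === 'text') {
      parent.children.push({ tag: '#text', text: token.text, children: [] });
    } else if (token.type === 'start') {
      const node = { tag: token.tag, children: [] };
      parent.children.push(node); // nesting in the markup becomes parent-child in the tree
      open.push(node);
    } else if (token.type === 'end') {
      // Error recovery: pop back to the matching open element, implicitly
      // closing anything still open inside it. A stray end tag is ignored.
      const index = open.map(n => n.tag).lastIndexOf(token.tag);
      if (index > 0) open.length = index;
    }
  }
  // Elements still open at the end of input are simply left closed.
  return root;
}

// Compact string form of a tree, for inspecting its shape.
function shape(node) {
  if (node.tag === '#text') return JSON.stringify(node.text);
  return `${node.tag}(${node.children.map(shape).join(',')})`;
}

// <div><p>hi</div></span>  (missing </p>, stray </span>)
const tokens = [
  { type: 'start', tag: 'div' },
  { type: 'start', tag: 'p' },
  { type: 'text', text: 'hi' },
  { type: 'end', tag: 'div' },
  { type: 'end', tag: 'span' },
];
console.log(shape(buildTree(tokens))); // #document(div(p("hi")))
```

The real parser has many tag-specific rules this sketch omits; for example, it closes an open `<p>` when another `<p>` starts.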

Step 2: Parsing CSS → the CSSOM

While building the DOM, the browser also parses CSS into a CSS Object Model — a separate tree of style rules. Every <link rel="stylesheet"> triggers a fetch; <style> blocks are parsed inline.

CSS is render-blocking by default. The browser won't proceed to the rendering steps until all CSS referenced in the <head> has downloaded and parsed — see web.dev's render-blocking resources guide for the mechanics. The reasoning is sound: you don't want to show unstyled content and then re-render it with styles — that's jarring and causes layout shifts.

This is why you should load only the CSS you need for the initial view, and defer the rest. It's also why inlining critical CSS is a common optimization (more on this in Understanding the Critical Rendering Path).
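As a rough model of the render-blocking rule, the sketch below treats each stylesheet as a hypothetical `{ href, media, loaded }` record and lets the first render proceed only once every stylesheet whose media query matches has loaded. The media check is passed in as a `matches` function; in a browser that role would be played by `window.matchMedia(media).matches`. It reflects one useful detail: a stylesheet whose media doesn't match (such as `media="print"` on a screen) is still downloaded but doesn't block rendering.

```javascript
// Hypothetical model of which stylesheets block the first render.
// `matches` stands in for window.matchMedia(media).matches.
function blockingSheets(sheets, matches) {
  return sheets.filter(sheet => {
    const media = sheet.media || 'all'; // no media attribute means "all"
    return matches(media) && !sheet.loaded;
  });
}

function canRenderFirstFrame(sheets, matches) {
  return blockingSheets(sheets, matches).length === 0;
}

// Example: a screen viewport, so only "all" and "screen" match.
const onScreen = media => media === 'all' || media === 'screen';

const sheets = [
  { href: '/critical.css', loaded: true },
  { href: '/print.css', media: 'print', loaded: false },  // downloaded, but never blocks on screen
  { href: '/theme.css', media: 'screen', loaded: false }, // still blocks
];

console.log(blockingSheets(sheets, onScreen).map(s => s.href)); // [ '/theme.css' ]
console.log(canRenderFirstFrame(sheets, onScreen));             // false
```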

Step 3: Combining into the Render Tree

The browser combines the DOM and CSSOM to produce the render tree: a tree that contains only visible nodes, each annotated with its computed styles.

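Elements with display: none are excluded from the render tree, along with all of their descendants. This is also how non-visual elements such as <head>, <script>, and <meta> are left out, since the browser's default stylesheet gives them display: none. Elements with visibility: hidden are included: they still take up space, they just aren't painted. Pseudo-elements such as ::before and ::after are added (when their content property generates something) even though they don't exist in the DOM.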
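Those inclusion rules can be sketched as a recursive walk. The node shape here is hypothetical (`{ tag, style, before, children }`, with `style` holding already-computed values and `before` standing in for a `::before` pseudo-element), and the walk ignores everything except `display`, `visibility`, and `content`. Elements like `<script>` drop out only because, as in real browsers, their computed `display` is `none`.

```javascript
// Sketch of render-tree construction over a hypothetical node shape:
//   { tag, style: { display, visibility }, before?: { content }, children: [] }
function buildRenderTree(node) {
  // display: none drops the element *and its entire subtree*.
  if (node.style.display === 'none') return null;

  // visibility: hidden stays in the tree (it still takes up space) but isn't painted.
  const renderNode = { tag: node.tag, painted: node.style.visibility !== 'hidden', children: [] };

  // ::before exists only in the render tree, and only when `content` generates something.
  const content = node.before && node.before.content;
  if (content && content !== 'none' && content !== 'normal') {
    // Simplification: the pseudo-element reuses its element's visibility.
    renderNode.children.push({ tag: '::before', painted: renderNode.painted, children: [] });
  }

  for (const child of node.children) {
    const childRenderNode = buildRenderTree(child);
    if (childRenderNode) renderNode.children.push(childRenderNode);
  }
  return renderNode;
}

// Tiny helper to build DOM-like nodes for the example.
const el = (tag, style, children = [], before) =>
  ({ tag, style: { display: 'block', visibility: 'visible', ...style }, children, before });

const dom = el('body', {}, [
  el('h1', {}, [], { content: '"#"' }),
  el('div', { display: 'none' }, [el('p', {})]), // removed together with its <p>
  el('span', { display: 'inline', visibility: 'hidden' }),
  el('script', { display: 'none' }),             // default stylesheet hides <script>
]);

const tree = buildRenderTree(dom);
console.log(tree.children.map(n => `${n.tag}:${n.painted}`)); // [ 'h1:true', 'span:false' ]
console.log(tree.children[0].children[0].tag);                // ::before
```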

At this stage, every node has its computed styles resolved — all cascading, inheritance, and variable resolution is complete.
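A minimal sketch of that resolution step for a single element: pick the winning declaration per property by specificity and then source order, and fall back to the parent's computed value for inherited properties (or to the initial value otherwise). It assumes the rules have already been matched to the element and that specificity is given as a hypothetical `[ids, classes, types]` triple. It ignores `!important`, origins, cascade layers, and `var()`, and keeps specified values such as `18px` as-is instead of converting units.

```javascript
// Sketch of computed-style resolution for ONE element (heavily simplified).
const INITIAL = { color: 'canvastext', 'font-size': 'medium', display: 'inline', visibility: 'visible' };
const INHERITED = new Set(['color', 'font-size', 'visibility']); // display is NOT inherited

function compareSpecificity(a, b) {
  for (let i = 0; i < 3; i++) {
    if (a[i] !== b[i]) return a[i] - b[i];
  }
  return 0;
}

function computeStyle(matchedRules, parentStyle) {
  // Cascade: sort by specificity. sort() is stable, so rules with equal
  // specificity keep source order and later ones overwrite earlier ones below.
  const ordered = [...matchedRules].sort((a, b) => compareSpecificity(a.specificity, b.specificity));
  const cascaded = {};
  for (const rule of ordered) Object.assign(cascaded, rule.declarations);

  // Inheritance and defaulting for every property this sketch knows about.
  const computed = {};
  for (const prop of Object.keys(INITIAL)) {
    if (prop in cascaded) computed[prop] = cascaded[prop];
    else if (INHERITED.has(prop) && parentStyle) computed[prop] = parentStyle[prop];
    else computed[prop] = INITIAL[prop];
  }
  return computed;
}

const bodyStyle = computeStyle(
  [{ specificity: [0, 0, 1], declarations: { color: 'navy', 'font-size': '18px' } }],
  null,
);
const cardStyle = computeStyle([
  { specificity: [0, 1, 0], declarations: { display: 'block' } }, // .card
  { specificity: [0, 0, 1], declarations: { display: 'flex' } },  // div: later, but less specific
], bodyStyle);

console.log(cardStyle.display, cardStyle.color, cardStyle['font-size']); // block navy 18px
```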

Step 4: Layout (Computing Geometry)

With the render tree in hand, the browser runs layout (also called "reflow"). This calculates the exact position and size of every element on the page, expressed as pixel values.

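Layout generally works top-down through the render tree: a parent passes its available width down to its children, and the children's resulting sizes feed back up into the parent's height. Along the way, the browser considers: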

- The viewport size

- The box model (width, height, padding, border, margin)

- Positioning (static, relative, absolute, fixed, sticky)

- Float and flex/grid layout algorithms

- Text wrapping

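This is computationally expensive. A full layout may have to resolve geometry for thousands of elements. Browsers try to limit the work by re-laying out only the parts of the tree marked as changed, but a change near the root can still affect most of the page. And layout is often triggered multiple times: once during initial load, and again whenever something changes size or position.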
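To show how widths flow down and heights flow back up, here is a toy block-layout pass. It is an assumption-heavy sketch with a hypothetical box shape: every box is a block, padding is a single number, leaves carry a fixed content height, and margins, borders, inline text, floats, flex, and grid are all ignored.

```javascript
// Toy block layout. Hypothetical box shape:
//   { name, padding?: number, height?: number (leaf content height), children?: [] }
function layoutBlock(box, x, y, availableWidth, out) {
  const padding = box.padding || 0;
  const children = box.children || [];
  const rect = { name: box.name, x, y, width: availableWidth, height: 0 };
  out.push(rect);

  // Top-down: each child gets the parent's content width and is stacked below the previous one.
  let cursorY = y + padding;
  for (const child of children) {
    const childRect = layoutBlock(child, x + padding, cursorY, availableWidth - 2 * padding, out);
    cursorY += childRect.height;
  }

  // Bottom-up: the parent's height comes from its children (or its own content height).
  const contentHeight = children.length > 0 ? cursorY - (y + padding) : (box.height || 0);
  rect.height = contentHeight + 2 * padding;
  return rect;
}

function layout(root, viewportWidth) {
  const out = [];
  layoutBlock(root, 0, 0, viewportWidth, out);
  return out;
}

const page = {
  name: 'body', padding: 8, children: [
    { name: 'header', height: 60 },
    { name: 'main', padding: 16, children: [{ name: 'p1', height: 40 }, { name: 'p2', height: 40 }] },
  ],
};

for (const r of layout(page, 400)) console.log(r.name, r.x, r.y, r.width, r.height);
// body 0 0 400 188
// header 8 8 384 60
// main 8 68 384 112
// p1 24 84 352 40
// p2 24 124 352 40
```

Bumping `p1` from 40 to 50 pixels moves `p2` down by 10 and grows both `main` and `body`, which is why a single geometry change can dirty so much of the tree.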

Step 5: Paint

After layout, the browser knows where every box goes. Paint fills in the actual visual content: text, colors, borders, images, box shadows. (Modern engines first record these drawing operations and then rasterize them into real pixels as a separate step.) MDN's "Critical rendering path" article walks through these steps in more detail.

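Painting is broken down into layers. Some elements are promoted to their own compositor layer, for example elements with will-change: transform, 3D transforms, or an active transform or opacity animation. This overlaps with, but isn't the same as, creating a new stacking context (via transform, opacity, filter, will-change, position: fixed, etc.): not every stacking context gets its own layer, and the exact promotion rules are browser-specific. Layers can be painted independently and then combined, which is what makes certain animations cheap.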

Not every property change costs the same. Changing color or background-color requires a repaint. Changing transform or opacity can often be handled entirely by the compositor on the GPU, with no repaint at all.

Step 6: Compositing

The final step combines the painted layers into the final image you see on screen. In modern browsers this is handled by a compositor, typically running on its own thread and using the GPU. If you animate transform or opacity on an element that has its own layer, the browser can hand that work to the compositor, skipping layout and paint entirely on subsequent frames.
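A toy model of why composite-only changes are cheap: each layer is painted once into its own pixel buffer (here a hypothetical 1-D array of grayscale values between 0 and 1), and compositing only positions and blends those buffers with the standard source-over rule, result = src × α + dst × (1 − α), using the layer's opacity as α. Moving the layer (its translateX) or fading it (its opacity) changes only the compositing inputs, so the paint function runs once no matter how many frames are produced.

```javascript
// Toy compositor over 1-D grayscale "pixels" in [0, 1].
// Layer shape (hypothetical): { pixels: number[], offset: integer px, opacity: 0..1 }
function composite(width, layers, background = 0) {
  const out = new Array(width).fill(background);
  for (const layer of layers) { // back-to-front order
    layer.pixels.forEach((value, i) => {
      const x = i + layer.offset; // translateX: only changes where the buffer lands
      if (x < 0 || x >= width) return;
      // Source-over: src * alpha + dst * (1 - alpha), with opacity as alpha.
      out[x] = value * layer.opacity + out[x] * (1 - layer.opacity);
    });
  }
  return out;
}

let paintCount = 0;
function paintBox() { // stands in for the expensive paint/raster step
  paintCount++;
  return [1, 1];      // a 2px-wide white box
}

const box = { pixels: paintBox(), offset: 0, opacity: 1 };

// Three animation frames: move right and fade out, compositing only.
const frames = [0, 1, 2].map(step => {
  box.offset = step;
  box.opacity = 1 - step * 0.5;
  return composite(4, [box]);
});

console.log(frames[1]);  // [ 0, 0.5, 0.5, 0 ]
console.log(paintCount); // 1
```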

This is why transform: translateX() is preferable to left for animations. Both move an element, but changing left triggers layout → paint → composite on every frame, while transform skips straight to composite: the GPU just moves a pre-painted layer. That difference is often what separates a janky animation from a smooth one at 60fps. The CSS Triggers reference (csstriggers.com) lists which properties trigger which stages.
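The same idea can be expressed as a lookup from property to triggered stages. The table below is a hand-written approximation for illustration only, not data taken from CSS Triggers or from any browser; real behavior varies by engine, by whether the element already has its own layer, and sometimes by the specific value being changed.

```javascript
// Hand-written approximation (illustrative only) of which pipeline stages
// a change to a given CSS property triggers.
const LAYOUT_PROPS = new Set(['width', 'height', 'padding', 'margin', 'top', 'left', 'font-size', 'display']);
const PAINT_PROPS = new Set(['color', 'background-color', 'border-color', 'outline', 'box-shadow', 'visibility']);
const COMPOSITE_PROPS = new Set(['transform', 'opacity']);

function stagesFor(property) {
  if (LAYOUT_PROPS.has(property)) return ['layout', 'paint', 'composite'];
  if (PAINT_PROPS.has(property)) return ['paint', 'composite'];
  if (COMPOSITE_PROPS.has(property)) return ['composite']; // assumes the element has its own layer
  return ['unknown'];
}

console.log(stagesFor('left').join(' → '));      // layout → paint → composite
console.log(stagesFor('transform').join(' → ')); // composite
```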

What Causes Reflow

Reflow is triggered any time the geometry of the page changes. Common causes:

- Adding or removing DOM nodes

- Changing an element's size or position via CSS (width, height, padding, margin, top, left)

- Changing font size

- Resizing the viewport

- Reading certain layout properties from JavaScript

That last one is important. Reading offsetWidth, offsetHeight, getBoundingClientRect(), scrollTop, and similar properties forces the browser to flush any pending layout changes to return an accurate value. If you alternate reading and writing layout properties in a loop, you trigger what's called layout thrashing:

```javascript
// Bad: read-write-read-write forces a layout on every iteration
elements.forEach(el => {
  const width = el.offsetWidth;      // read: forces layout if a previous write left it dirty
  el.style.width = width * 2 + 'px'; // write: marks layout dirty again
});

// Better: batch reads, then batch writes.
// Array.from also works when `elements` is a NodeList, which has no .map().
const widths = Array.from(elements, el => el.offsetWidth); // at most one layout flush
elements.forEach((el, i) => {
  el.style.width = widths[i] * 2 + 'px';
});
```

What Causes Repaint

Repaint happens when visual properties change without affecting geometry. Changing color, background-color, border-color, outline, or box-shadow on an element triggers a repaint without a reflow. A repaint is cheaper than a reflow, but it is still work the browser has to do.

How JavaScript Blocks Parsing

By default, a classic <script> tag (one without async or defer) halts HTML parsing. The parser stops while the script downloads and executes, and only then does parsing resume. This is because JavaScript can modify the DOM: document.write() can insert arbitrary HTML into the parse stream. (Module scripts, <script type="module">, are deferred by default.)

```mermaid
sequenceDiagram
    participant Parser
    participant JS
    participant DOM
    Note over Parser: synchronous script
    Parser->>JS: encounter script tag
    Parser->>Parser: pause parsing
    JS->>JS: download + execute
    JS->>DOM: optional document.write
    JS-->>Parser: resume
    Note over Parser: defer script
    Parser->>JS: encounter script tag
    Parser->>JS: download in parallel
    Parser->>DOM: continue parsing
    Parser-->>JS: parse done, run scripts in order
    Note over Parser: async script
    Parser->>JS: encounter script tag
    Parser->>JS: download in parallel
    Parser->>DOM: continue parsing
    JS-->>Parser: execute when ready (any order)
```

The fix is two attributes:

```html
<!-- defer: downloads in parallel, executes after HTML is parsed -->
<script src="app.js" defer></script>

<!-- async: downloads in parallel, executes as soon as it's ready -->
<script src="analytics.js" async></script>
```

defer maintains execution order and runs after parsing finishes (just before DOMContentLoaded fires). It's the right choice for application code that depends on the DOM. async executes whenever its download completes, so order is not guaranteed. It's the right choice for independent scripts like analytics.

Both remove the parser-blocking penalty of a synchronous <script> in <head>, which would otherwise also delay the first render.
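The ordering rules for the two attributes can be captured in a small simulation. It assumes a hypothetical input: each script has a document position (its index), a mode (`defer` or `async`), and the time its download finishes, and the parser finishes at a fixed `parseEnd` time. It models only execution order; synchronous scripts and the time each script takes to run are left out.

```javascript
// Hypothetical model: when do defer and async scripts execute?
//   scripts: [{ src, mode: 'defer' | 'async', loadedAt }] in document order
//   parseEnd: time (ms) at which the HTML parser finishes
function executionOrder(scripts, parseEnd) {
  const runs = [];
  let deferClock = parseEnd; // defer scripts wait for parsing, then run in document order
  for (const script of scripts) {
    if (script.mode === 'async') {
      runs.push({ src: script.src, at: script.loadedAt }); // runs as soon as it arrives
    } else {
      deferClock = Math.max(deferClock, script.loadedAt);  // also waits for its own download
      runs.push({ src: script.src, at: deferClock });
    }
  }
  // Sort by execution time; sort() is stable, so ties keep document order.
  return runs.sort((a, b) => a.at - b.at).map(r => r.src);
}

const scripts = [
  { src: 'app.js', mode: 'defer', loadedAt: 300 },
  { src: 'vendor.js', mode: 'defer', loadedAt: 50 },
  { src: 'analytics.js', mode: 'async', loadedAt: 120 },
  { src: 'ads.js', mode: 'async', loadedAt: 40 },
];

console.log(executionOrder(scripts, 200));
// [ 'ads.js', 'analytics.js', 'app.js', 'vendor.js' ]
```

Note that `vendor.js` finishes downloading first but still runs after `app.js`, because deferred scripts keep document order, while the two async scripts run in arrival order.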

Using DevTools to See the Pipeline

The Chrome DevTools Performance tab shows you the entire rendering pipeline for a page load. Recording a page load will show you:

- Parse HTML: how long DOM construction took

- Recalculate Style: selector matching and computed-style resolution (stylesheet parsing itself shows up as Parse Stylesheet)

- Layout: geometry calculation

- Paint: pixel filling

- Composite Layers: GPU compositing

Long bars in Layout or Paint are likely bottlenecks. Also look for "forced reflow" warnings, which appear when JavaScript triggered a synchronous layout calculation.

Why This Matters for Your Code

Understanding this pipeline makes performance decisions logical rather than arbitrary. Minified HTML and CSS download faster and are slightly cheaper to parse; the CSS Minifier and HTML Formatter help keep source tight. Deferring JavaScript keeps the parser unblocked. Reserving space for images (with width and height attributes) avoids reflows after they load. Animating transform and opacity instead of positional properties lets the compositor keep those animations off the main thread.
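The image point is easy to quantify. Modern browsers derive a default aspect ratio from an image's width and height attributes before the file arrives, so a responsive image (for example `width: 100%; height: auto`) gets a correctly sized box during the first layout. The arithmetic, as a small hypothetical helper:

```javascript
// Height the browser can reserve for an image before it loads, given its
// width/height attributes and the width CSS gives it (e.g. width: 100%; height: auto).
function reservedHeight(attrWidth, attrHeight, renderedWidth) {
  if (!attrWidth || !attrHeight) return 0; // no ratio known, so this sketch reserves nothing
  return renderedWidth * (attrHeight / attrWidth);
}

// <img src="hero.jpg" width="1600" height="900"> rendered in a 360px-wide column
console.log(reservedHeight(1600, 900, 360)); // 202.5
```

Without the attributes, the box typically starts collapsed and everything below it shifts when the image arrives, which is exactly the post-load reflow the attributes avoid.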

The rendering pipeline is deterministic. Once you know the steps, you can predict which stages a code change will trigger, and roughly what it will cost.

For a practical guide to applying these concepts to page load performance, Understanding the Critical Rendering Path covers the optimization techniques that follow directly from this pipeline. And for guidance on how web fonts interact with the paint step, Web Fonts Performance explains why font loading strategy matters to perceived load time.

FAQ

Why is `transform` faster than `top`/`left` for animations?

transform animations can run entirely on the compositor (on the GPU, once the element has its own layer), bypassing layout and paint. Animating top or left triggers layout → paint → composite on every frame because the element's geometry changes. A 60fps animation has a budget of about 16.7ms per frame (1000ms ÷ 60), and layout alone can eat 5–10ms on a complex page. Transform-based animations often fit comfortably under that budget where top/left animations cause jank.

What's the difference between layout, paint, and compositing in performance terms?

As a rough rule of thumb, layout is usually the most expensive: often 5–20ms for a moderate page, scaling with element count. Paint is moderate, around 1–5ms per layer. Compositing is cheap, usually under 1ms, because it is GPU work that just blends pre-rendered layers. Optimization strategies follow this hierarchy: avoid layout if you can, prefer paint-only changes over layout, and prefer composite-only changes over paint.

How does the Speculation Rules API change the pipeline?

The Speculation Rules API (Chrome 121+) lets you tell the browser to prerender entire pages in the background — running the full pipeline (DOM, CSSOM, layout, paint) for a hidden navigation. When the user clicks the link, the page is already painted and just becomes visible. This is more aggressive than <link rel="prefetch"> (which only fetches the bytes) and produces near-instant navigations. Brave and other Chromium browsers also support it.
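A minimal sketch of opting in from JavaScript. The rules object follows the documented list-rule shape (`{ prerender: [{ source: 'list', urls: [...] }] }`), and `HTMLScriptElement.supports('speculationrules')` is the feature check; the helper names and the `/next-article` URL are hypothetical. In browsers without support the injection is simply skipped, so the page still works, just without the prerender.

```javascript
// Build a list-style speculation rule set for a few URLs (hypothetical helper).
function buildSpeculationRules(urls, action = 'prerender') {
  return { [action]: [{ source: 'list', urls }] };
}

// Browser-only: inject the rules if the browser supports them.
function injectSpeculationRules(rules) {
  if (typeof HTMLScriptElement === 'undefined' ||
      !HTMLScriptElement.supports ||
      !HTMLScriptElement.supports('speculationrules')) {
    return false; // no support: nothing happens
  }
  const script = document.createElement('script');
  script.type = 'speculationrules';
  script.textContent = JSON.stringify(rules);
  document.head.append(script);
  return true;
}

const rules = buildSpeculationRules(['/next-article']);
console.log(JSON.stringify(rules)); // {"prerender":[{"source":"list","urls":["/next-article"]}]}
```

The same rules can also be written directly as a static `<script type="speculationrules">` block in the HTML; the JavaScript route is useful when the likely next URL is only known at runtime.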

When should I use `will-change`?

Sparingly, and right before the change happens — not as a permanent declaration. will-change: transform tells the browser to promote the element to its own compositor layer so transforms are GPU-cheap. But every promoted layer costs memory, so applying will-change to every element actually hurts performance. The right pattern is to add it just before an animation starts and remove it after the animation finishes.
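A sketch of that add-then-remove pattern. It assumes the element animates through a CSS transition on `transform`, so the browser fires `transitionend` with `propertyName === 'transform'` when it finishes; the function names and the `button`/`panel` elements in the usage comment are hypothetical. The hint is set in a separate step, ahead of the change, so the browser has a moment to prepare the layer.

```javascript
// Call a little before the animation, e.g. when the pointer enters the trigger.
function prepareForTransform(el) {
  el.style.willChange = 'transform'; // hint: this element's transform is about to change
}

// Start the transition, then drop the hint once the transform transition ends.
function runTransform(el, transformValue) {
  el.addEventListener('transitionend', function onEnd(event) {
    if (event.propertyName !== 'transform') return; // ignore other transitions on el
    el.style.willChange = 'auto';                  // let the browser release the layer
    el.removeEventListener('transitionend', onEnd);
  });
  el.style.transform = transformValue; // kicks off the CSS transition
}

// Usage (browser):
// button.addEventListener('pointerenter', () => prepareForTransform(panel));
// button.addEventListener('click', () => runTransform(panel, 'translateX(0)'));
```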

What's the cost of forcing a synchronous layout?

Roughly 5–20ms on a typical page, depending on DOM complexity and how much of the layout is dirty. The browser has to flush any pending style/layout changes to give you an accurate value from offsetWidth, getBoundingClientRect(), etc. If you alternate reads and writes in a loop ("layout thrashing"), each iteration triggers a flush; in the worst case, where every flush is that expensive, a 100-element loop can add 500–2000ms of main-thread work (100 × 5–20ms). Always batch reads first, then writes.
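You can see the difference in flush counts without a browser by using a mock. The sketch below fakes a layout engine (all names hypothetical): a write marks layout dirty, and reading `offsetWidth` while layout is dirty counts as one forced synchronous layout. It counts flushes only; it says nothing about how long each one takes.

```javascript
// Mock layout engine: writes dirty the layout, reads flush it if dirty.
function createMockPage(count) {
  const page = { dirty: false, forcedLayouts: 0, elements: [] };
  for (let i = 0; i < count; i++) {
    let width = 100;
    page.elements.push({
      get offsetWidth() {
        if (page.dirty) { page.forcedLayouts++; page.dirty = false; } // synchronous layout
        return width;
      },
      setWidth(px) { width = px; page.dirty = true; }, // stands in for el.style.width = ...
    });
  }
  return page;
}

// Interleaved: read, write, read, write...
const thrash = createMockPage(100);
thrash.elements.forEach(el => el.setWidth(el.offsetWidth * 2));

// Batched: all reads first, then all writes.
const batched = createMockPage(100);
const widths = batched.elements.map(el => el.offsetWidth);
batched.elements.forEach((el, i) => el.setWidth(widths[i] * 2));

console.log(thrash.forcedLayouts, batched.forcedLayouts); // 99 0
```

The batched version still needs one layout, but the browser performs it once at the next rendering opportunity instead of synchronously inside the loop.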

Does `display: none` trigger reflow?

Setting display: none does trigger a reflow once, to remove the element from the layout. After that, the element is excluded from reflows for as long as it stays hidden. Per toggle, changing display is more expensive than changing visibility: hidden, which keeps the element in flow (so its geometry doesn't change) and only skips painting it. For animations, neither is a good choice; use opacity + transform for performant transitions.

Does HTML streaming help with the rendering pipeline?

Yes, significantly. The browser's parser is incremental, so it can build the DOM and start painting before the full HTML response has arrived. Frameworks such as React (streaming server rendering since React 18), SolidStart, and Astro stream HTML in chunks so above-the-fold content renders while below-the-fold work is still happening on the server. This can noticeably improve both Time to First Byte (the first chunk leaves the server sooner) and First Contentful Paint.
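A framework-free Node.js sketch of the idea: send the document head and page shell immediately with `res.write()`, then the data-dependent part once it's ready. The `loadData` function, the `/app.css` path, and the markup are hypothetical; the frameworks above do this with their own streaming renderers rather than hand-written chunks.

```javascript
// Escape text before inserting it into HTML.
const escapeHtml = s => String(s).replace(/[&<>"']/g, c =>
  ({ '&': '&amp;', '<': '&lt;', '>': '&gt;', '"': '&quot;', "'": '&#39;' }[c]));

// Stream the shell first, the slow part later.
// `loadData` is a hypothetical async function (e.g. a database query).
async function handlePage(req, res, loadData) {
  res.writeHead(200, { 'Content-Type': 'text/html; charset=utf-8' });

  // Chunk 1: head + above-the-fold shell. The browser can start parsing,
  // fetching the CSS, and painting this before the rest exists.
  res.write('<!doctype html><html><head><link rel="stylesheet" href="/app.css"></head>');
  res.write('<body><header>My Shop</header><main>');

  // Chunk 2: whatever had to wait on the server.
  const items = await loadData();
  res.write(`<ul>${items.map(item => `<li>${escapeHtml(item)}</li>`).join('')}</ul>`);

  res.end('</main></body></html>');
}

// Usage with Node's built-in server (not started here):
// require('node:http').createServer((req, res) => handlePage(req, res, fetchItems)).listen(3000);
```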

What changed about the rendering pipeline in modern browsers?

The pipeline is conceptually the same, but implementations have gotten more parallel. Chrome's RenderingNG architecture (2021+) moves work such as rasterization, image decoding, and compositing off the main thread, although style, layout, and paint recording still run on it. Off-main-thread compositing is now standard in all major engines. The Houdini APIs (Paint Worklet, Layout Worklet, Animation Worklet) let developers extend specific stages with custom code, though only the Paint Worklet has shipped in stable browsers, and only in Chromium-based ones. The biggest change for developers: less work blocks the main thread, but understanding when work hits the main thread is still the key to fast pages.